ENGINEERING INFERENCE ENGINE

WHAT DOES IT DO?

The Engineering Inference Engine is an experimental forward-chaining inference engine. It is used for quick conversions and calculations all the way up to model-based design of space missions. Public models can be used via models.parkinresearch.com and via the ‘Models’ menu above.

You can save and share your solutions simply by copying the auto-generated URL into e-mails and reports.

FEATURES

Speed

- The Engineering Inference Engine is a super-fast forward chaining inference engine (written in C++) that specializes in propagating engineering quantities.

- Each input value generates a cascade of inferences. The engine calls the simplest and quickest functions first, while also respecting mathematical branches that change the solution procedure.

- Iterative solutions are automatically deduced and computational complexity is minimized by omitting inferences that do not affect the value(s) being solved for. This is important because needless repetition burns CPU time, especially in complex engineering designs where iterations can run 5 levels deep or more.

Scalability

- Model size is limited only by memory size.

- Data types are objects within the Engineering Inference Engine: they can be inherited and combined to make composite types. New data types are built from booleans, integers, doubles, complex doubles, vectors and matrices thereof, and quaternions. There are also character, string and struct types.

- The Engineering Inference Engine is generic: any problem that can be represented by known functions can be solved. Functions are black boxes; they are simply function handles with lists of parameters. The user manages the fundamental definition of the problem and decomposes it for the inference engine, and the engine then solves the problem by inferring which function handles to call in what sequence.

Reusability

- Models are object oriented. Objects and the sub-objects they are built from are addressable using dot notation e.g. Root.Sub.Coordinates.z.

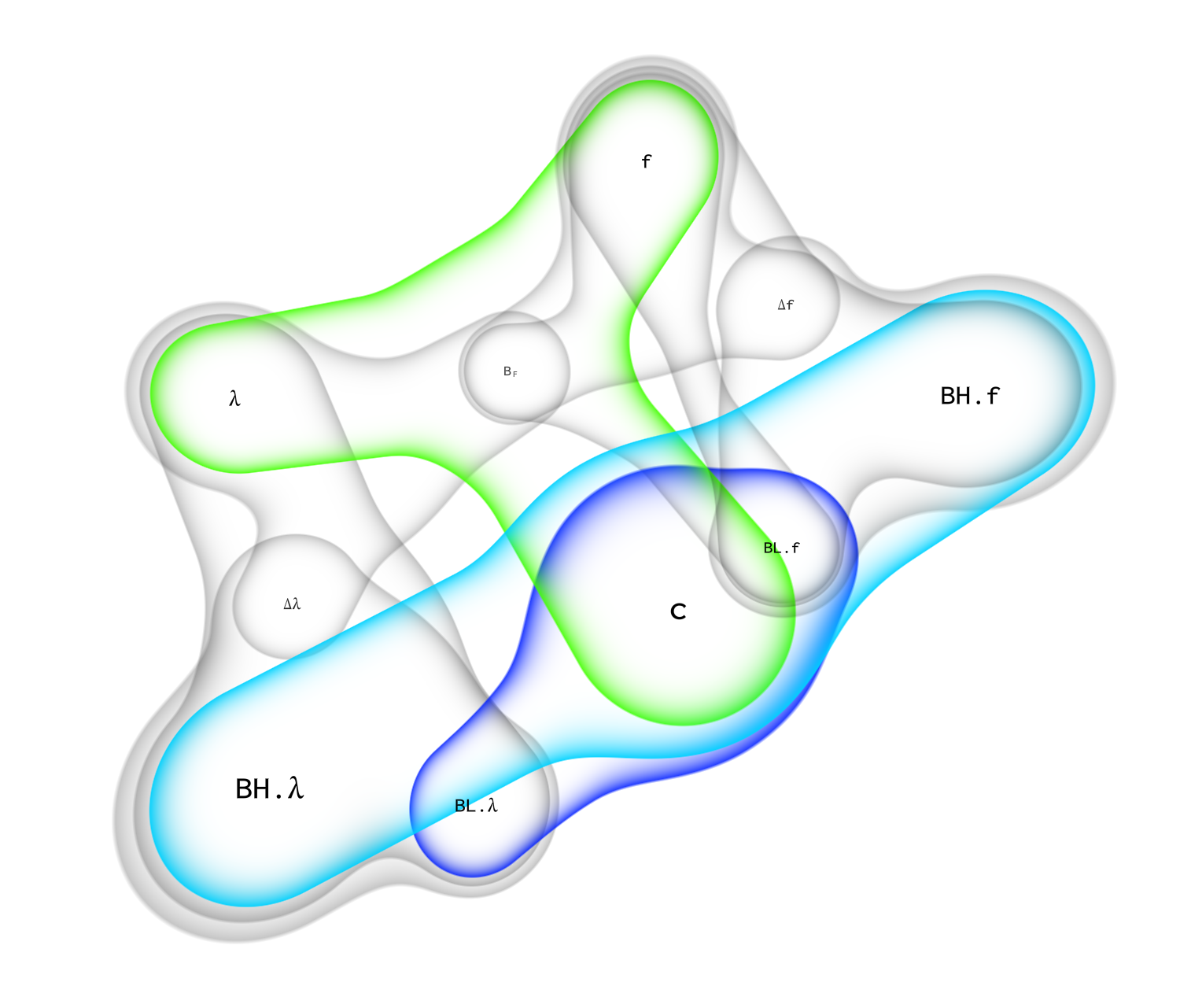

- Two models are combined by simply joining the variables that both models have in common. Solutions then propagate through the joined model.

- A standardized engineering/physics ontology mirrors the categorizations used in mathematics and engineering courses taught in schools and universities. For example, there are objects representing the thermodynamics of a simple compressible substance, the state of a fluid at rest, in motion, and within a duct. Each builds upon the last, forming a highly compact and reusable body of knowledge.

- Knowledge held within the Engineering Inference Engine is inherently more compact and reusable than conventional code.

WHY IS IT BETTER THAN TRADITIONAL ENGINEERING CODE?

Metacognition defines three types of knowledge: Declarative, procedural and conditional.

Traditional code is procedural and that is why it fails: it is an incomplete representation of the problem it solves, to the point where even minor changes to the inputs can force a comprehensive rewrite. Humans don’t work this way; we perceive problems declaratively.

Inference engines represent models as declarative and conditional knowledge, approaching an unchanging standard as the ontology becomes fully decomposed. Representing problems declaratively frees us from the endless cycle of writing and debugging subroutines, so the focus moves to understanding and drilling down to the essence of problems and exploring their solution space.